The central argument of MacAskill’s book What We Owe The Future is:

There could be a lot of people in the future.

Possible future people deserve moral consideration just as much as anyone else.

Therefore, a lot of moral consideration should be given to possible future people.

But maybe morality doesn’t apply to possible future people. Maybe we only need to give moral consideration to people who actually already exist.

Chapter 8 of What We Owe The Future addresses this question. It is titled “Is It Good to Make Happy People?”

In this post I’ll present the arguments MacAskill makes for why it is good to make happy people. Then I’ll say why I think he’s wrong. Then I’ll say why I think it’s good to make happy people anyway.

Population ethics

This is Derek Parfit.

Derek was a moral philosopher at Oxford. In 1984 he wrote a book called Reasons and Persons, where he introduced an idea he called the repugnant conclusion.

The argument goes something like this:

Imagine a bunch of people with different levels of happiness.

The orange person is very happy with his life. The yellow person is kinda happy with their life. The green person is neutral about their life, so they wouldn’t care if they died. The blue person is miserable and would prefer they were dead.

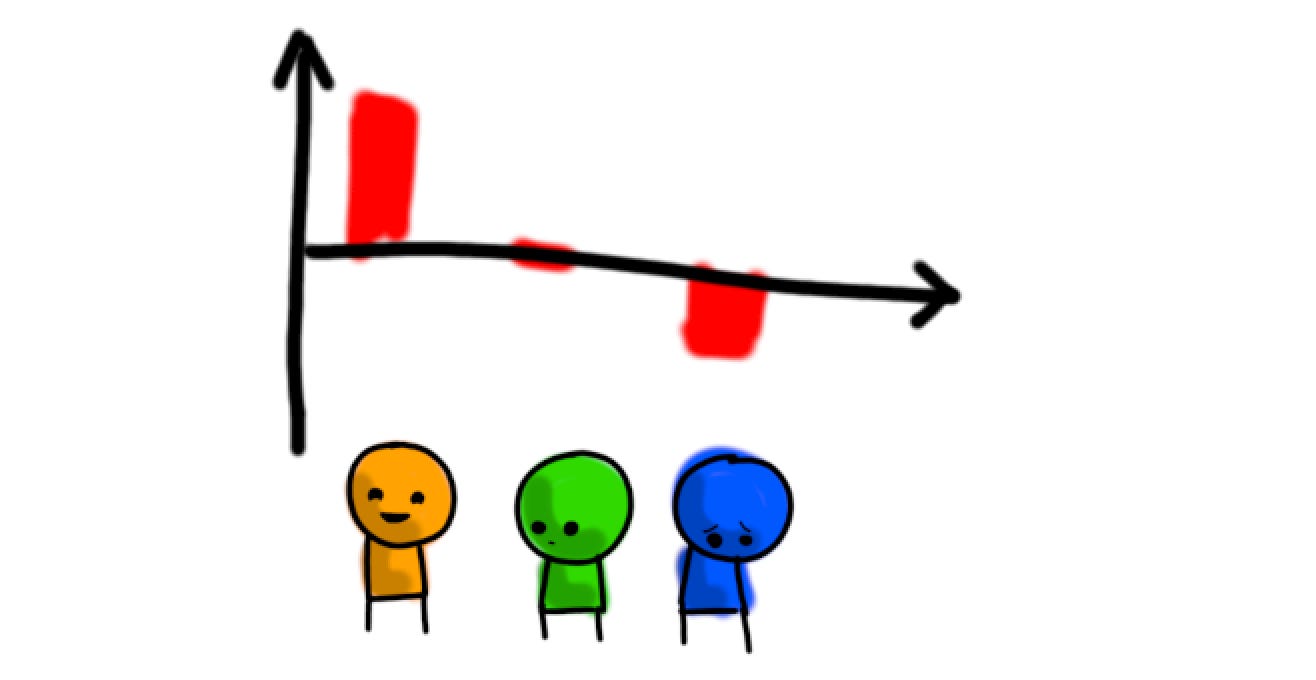

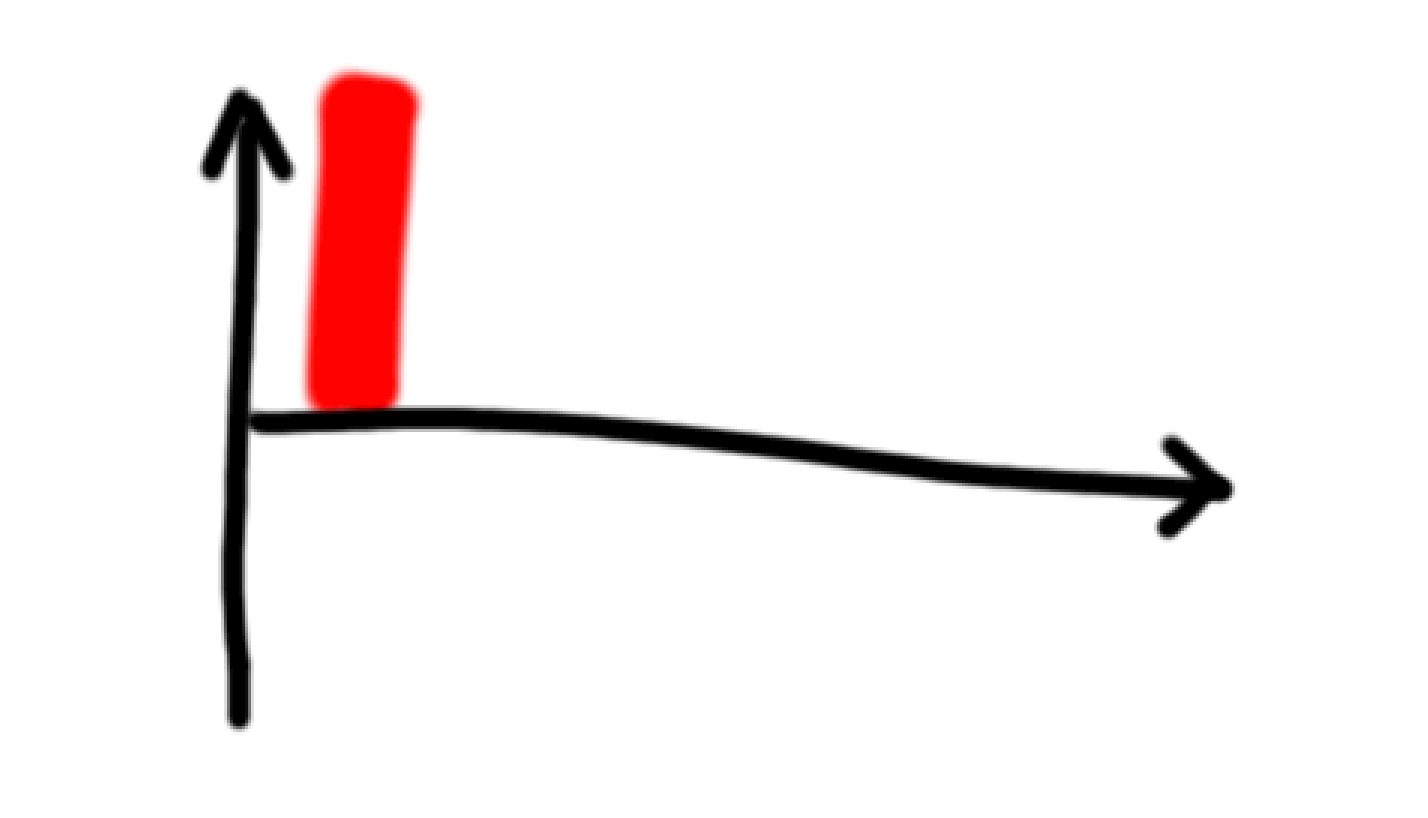

We can plot these different levels of happiness with a bar chart, where the x-axis represents the point of neutrality between living and dying.

When there are several people at the same happiness level, we can remove the gaps between the bars. So here’s a world with a few very happy people:

And here’s a world with a lot of kinda happy people:

Parfit used these kinds of diagrams in Reasons and Persons to illustrate the repugnant conclusion.

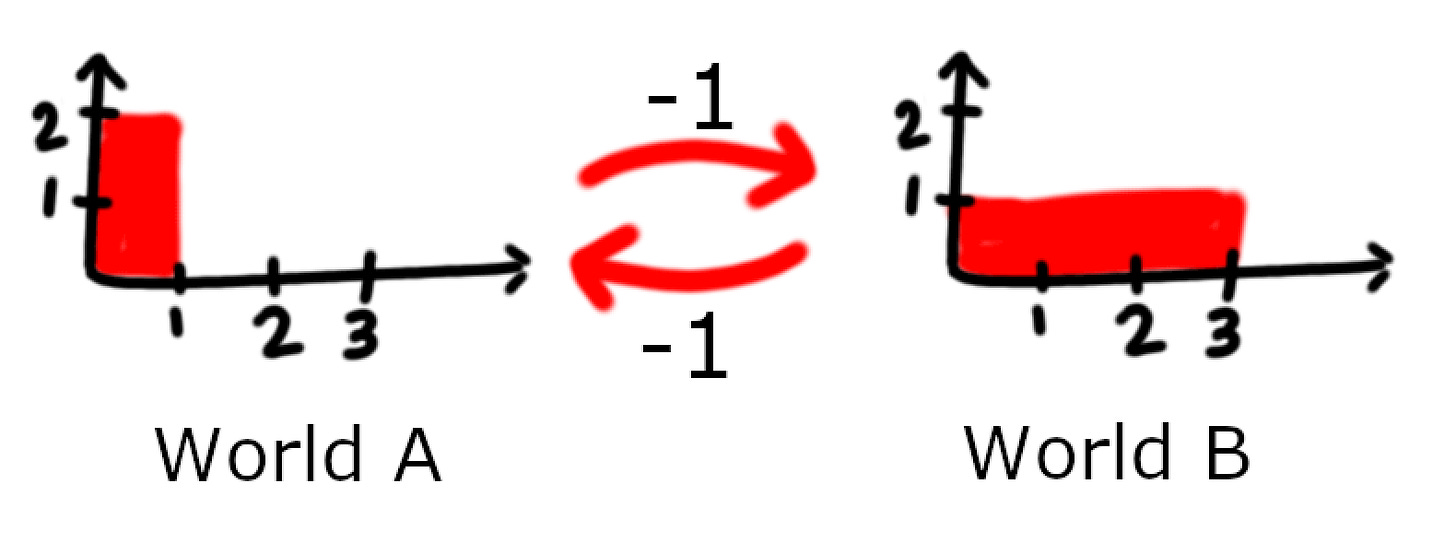

Let’s consider a hypothetical World A, which has ten billion lives of bliss:

This is World B, which has twenty billion lives of slightly-less-bliss compared to World A:

Which is better: World A or World B? The most obvious thing to do is to just add up how happy everyone is (which is the same as taking the area of the bar) and whichever world has the bigger total is better.

This approach of evaluating worlds by taking the sum of everyone’s happiness is called total utilitarianism.

And according to total utilitarianism, World B is better than World A because the bar has a greater area.
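To make the “area of the bar” idea concrete, here’s a minimal Python sketch of total utilitarianism. The happiness levels (100 units per person in World A, 90 in World B) are hypothetical numbers I picked; the book only says World B’s lives are slightly less blissful.

```python
# Total utilitarianism: a world's value is the sum of everyone's happiness,
# i.e. the area of its bar (population x happiness level).
# The happiness levels below are hypothetical, chosen only for illustration.

def total_utility(population: int, happiness: float) -> float:
    """Area of the bar: number of people times their shared happiness level."""
    return population * happiness

world_a = total_utility(10_000_000_000, 100.0)  # ten billion lives of bliss
world_b = total_utility(20_000_000_000, 90.0)   # twenty billion slightly-less-blissful lives

print(f"World A: {world_a:.3g}")
print(f"World B: {world_b:.3g}")
print("World B is better" if world_b > world_a else "World A is better")
```

Doubling the population more than makes up for the 10% drop in happiness, so the sum favours World B.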

Now consider World C, which has ten billion more people and a slightly lower level of happiness, such that its total is greater than that of World A and World B.

Repeat this process of increasing the total by adding people and reducing the happiness until we get to World Z.[1]

We would end up with an enormous number of people with lives that have only slightly positive wellbeing, and we would have to conclude that [according to total utilitarianism] that world is better than the world we started with, with ten billion lives of bliss. That is, we have arrived at the Repugnant Conclusion.

If it’s not obvious why this is “repugnant”, Resident Contrarian put it well:

This leads to the argument that it’s not only good but morally demanded that we try to overpopulate the world as much as possible, right up to the point where life is so bad for everyone that the average person is almost-but-not-quite suicidal.

The fallout from Parfit drawing these simple diagrams is that there is now a new field of philosophy called “population ethics”, in which philosophers write essays arguing back and forth about whether to accept the repugnant conclusion.

But that’s just perspective

I don’t find this particularly compelling.

“Only slightly positive wellbeing” and “almost-but-not-quite suicidal” are a matter of perspective. The people living in World Z look “only slightly positive” compared to the people in World A. But the people in World A look “only slightly positive” when compared to a world where everyone is 1000x happier than in World A.

But we can tweak Parfit’s argument (which MacAskill parrots in WWOTF) to make it a little stronger:

The arbitrarily repugnant conclusion

There's no reason we have to stop at World Z. We can keep going forever[2] and converge towards infinitely many people with literally zero happiness.

Formally, take an infinite series of worlds $\text{world}_1, \text{world}_2, \text{world}_3, \dots$, where $\text{world}_i$ contains $3^i$ people, each with a happiness level of $2^{-i}$. The total happiness of $\text{world}_i$ is $3^i \cdot 2^{-i} = (3/2)^i$, so each world is 50% better than the previous one. But in the limit, at $\text{world}_\infty$, each person has zero happiness, since $2^{-i} \to 0$. (If you know real analysis you should be able to prove this pretty easily.)

This isn’t quite the same thing as saying that the best possible world is one with infinitely many people who have zero happiness. But it’s close. It’s like a curious cat who wants to get as close to touching the water as possible, but doesn’t actually want to touch it.

I find this much weirder than the original idea that World Z is better than World A.
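Here’s a quick sanity check of that series in Python, using exact fractions so rounding doesn’t muddy anything. It’s a sketch of the specific series above (3^i people at happiness 2^-i), not anything from the book; `world` and `total` are just illustrative names.

```python
from fractions import Fraction

def world(i: int) -> tuple[int, Fraction]:
    """World i: 3**i people, each with happiness 2**-i (exact rational arithmetic)."""
    return 3**i, Fraction(1, 2**i)

def total(i: int) -> Fraction:
    """Total happiness of world i, which simplifies to (3/2)**i."""
    people, happiness = world(i)
    return people * happiness

for i in range(1, 6):
    people, happiness = world(i)
    ratio = total(i + 1) / total(i)  # always 3/2: each world is 50% better
    print(i, people, happiness, total(i), ratio)
```

The total grows without bound while each person’s happiness halves at every step.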

Does any of this even make sense?

Quick side-note: in the setup for the repugnant conclusion, it was just assumed that you can put a single number on how happy any given person is. There’s a sense in which this is obviously not true. Happiness is a complex, multi-faceted experience. It might be possible to put a meaningful number on happiness using something like the von Neumann-Morgenstern construction. But then there’s no way to know if my happiness level 7 is the same as your happiness level 7, or if we’re using totally different scales. This is the interpersonal utility comparison problem, and as far as I know, no one is trying very hard to solve it.

The people who argue about the repugnant conclusion don’t seem to think any of this is an issue.
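A small illustration of why this matters, with made-up numbers. Von Neumann-Morgenstern utilities are only pinned down up to a positive affine transformation (multiply by a positive number, add a constant), so rescaling one person’s utilities changes nothing about their preferences. But it can change which option has the bigger sum. The people (`alice`, `carol`) and options are hypothetical.

```python
# vNM utilities are unique only up to u -> a*u + b with a > 0: both versions
# describe the same preferences. All numbers here are hypothetical.

alice = {"option_x": 7.0, "option_y": 3.0}
carol = {"option_x": 2.0, "option_y": 5.0}

def rescale(utility: dict[str, float], a: float, b: float) -> dict[str, float]:
    """Apply a positive affine transformation; the preference ordering is unchanged."""
    assert a > 0
    return {option: a * u + b for option, u in utility.items()}

def best_by_sum(*people: dict[str, float]) -> str:
    """Pick the option with the highest summed utility across people."""
    options = people[0].keys()
    return max(options, key=lambda o: sum(p[o] for p in people))

print(best_by_sum(alice, carol))                  # sums: x = 9, y = 8
print(best_by_sum(alice, rescale(carol, 3, 0)))   # sums: x = 13, y = 18
```

Carol prefers option_y in both cases, but the “total happiness” verdict flips depending on which of her equally valid scales we use.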

“Proof” of the Repugnant Conclusion

MacAskill:

The Repugnant Conclusion is certainly unintuitive. Does this mean that we should automatically reject the total view? I don’t think so. […] Though the Repugnant Conclusion is unintuitive, it turns out that it follows from three other premises that I would regard as close to indisputable.

The three premises are:

If you make all existing people happier and create new happy people, then you make the world better.

If World X and World Y have the same population, but people are on average happier in World X, then World X is better than World Y.

World betterness is transitive. That is, if

World X is better than World Y, and

World Y is better than World Z, then

World X is better than World Z

These three “close to indisputable” premises entail the repugnant conclusion. So is the repugnant conclusion inevitable?

I don’t think so. I think there’s a fallacious equivocation here.

But first, we need to understand…

Person-affecting utilitarianism

An alternative to total utilitarianism is person-affecting utilitarianism. Philosopher Jan Narveson put it this way:

We are in favour of making people happy, but neutral about making happy people.

That is, in person-affecting utilitarianism, you can’t increase the value of the world by creating new people who are happy. You have to make the people who already exist happier.

Person-affecting utilitarianism is fairly intuitive to me. Let’s say you create a happy person called Bob. Bob might be glad he exists, and he might want to continue existing. But before he existed he didn’t want to start existing. So the act of Bob-creation was:

Neutral before Bob existed.

Good after Bob existed.

There’s an interesting asymmetry here. Going from a world where Bob doesn’t exist to one where Bob does exist is not good. But going from a world where Bob does exist to one where he doesn’t exist is bad.[3]

Consider three possible worlds:[4]

In World A, Bob doesn’t exist.

In World B, Bob exists and is happy about it.

In World C, Bob exists and is neutral about it. He’s not happy but he’s not miserable.

We can draw the value changes going from each world to each other world (in some arbitrary “happiness units”):

The key thing to note here is that each world does not have one unique value associated with it. The value of being in a certain world depends on the path you took to get to that world.

If you go back and forth between World A and World B, then you’ll accumulate a bigger and bigger negative utility value. Which makes sense. Repeatedly bringing someone into existence who enjoys life and wants to continue living, and then making them not exist anymore seems… bad.

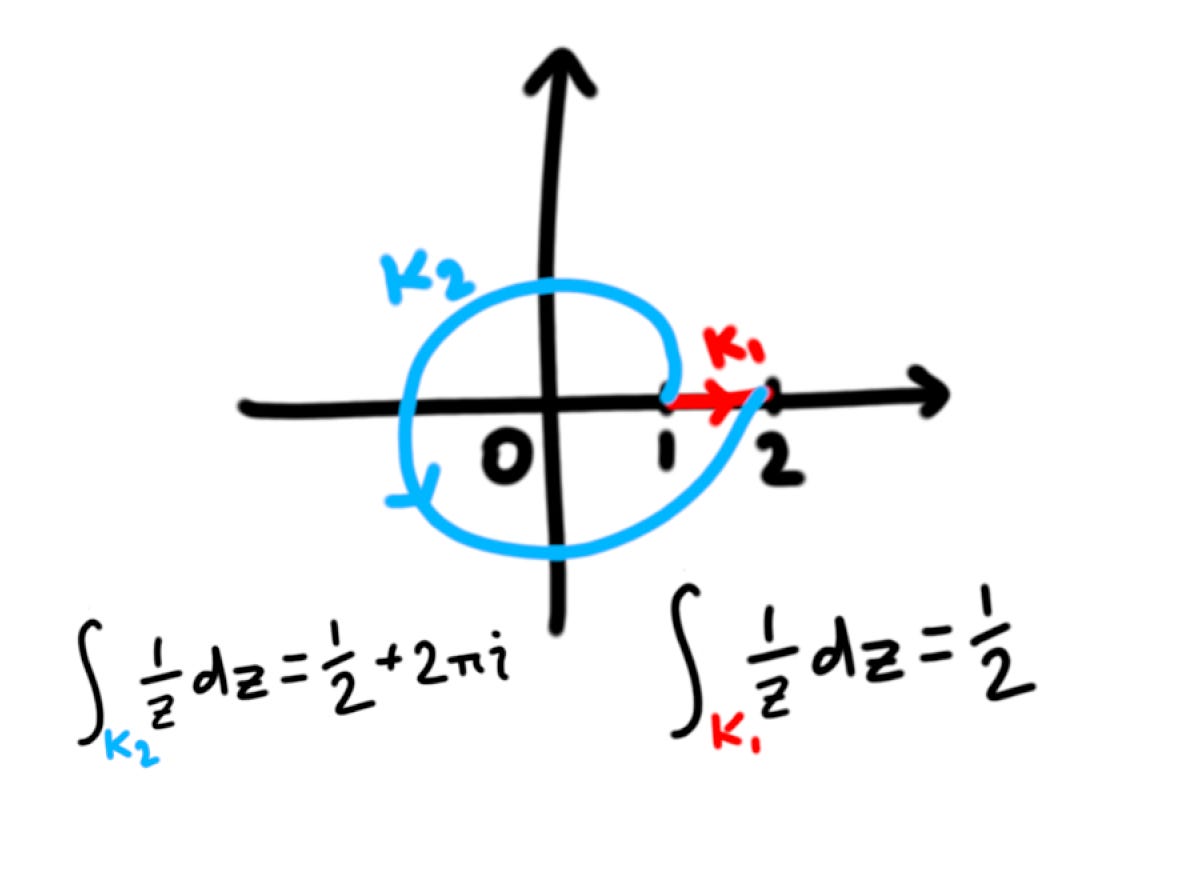

It’s like how in complex analysis the value of an integral from A to B can depend on the contour you integrate along.

Another way of thinking about it is that utility/value is no longer a property of worlds. It’s a property of paths.

Mathematically, you go from thinking of utility as a mapping from worlds to real numbers, to a mapping from sets of worlds to complete weighted directed graphs.
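Here’s one way to formalize this in Python. It’s my own sketch, not MacAskill’s or Narveson’s: a move from one world to another is scored only by how it changes the happiness of people who exist in the world you start from, with non-existence counting as 0 and newly created people counting for nothing. Bob’s 2 units of happiness in World B is an arbitrary choice. The edges form a complete weighted directed graph, and the value of a path is the sum of its edge weights.

```python
from itertools import permutations

# Hypothetical person-affecting rule: only people who exist in the starting
# world count. Non-existence counts as happiness 0; new people count for nothing.

World = dict[str, float]  # person -> happiness level

def move_value(origin: World, destination: World) -> float:
    return sum(destination.get(person, 0.0) - h for person, h in origin.items())

worlds: dict[str, World] = {
    "A": {},            # Bob doesn't exist
    "B": {"bob": 2.0},  # Bob exists and is happy (2 units is an arbitrary choice)
    "C": {"bob": 0.0},  # Bob exists and is neutral
}

# Utility as a complete weighted directed graph: one weighted edge per ordered pair.
edges = {(u, v): move_value(worlds[u], worlds[v]) for u, v in permutations(worlds, 2)}

def path_value(path: list[str]) -> float:
    """Sum edge weights along a path; the result depends on the route taken."""
    return sum(edges[(u, v)] for u, v in zip(path, path[1:]))

for (u, v), w in sorted(edges.items()):
    print(f"{u} -> {v}: {w:+}")
print(path_value(["A", "B", "A", "B", "A"]))  # two round trips between A and B
```

Each A-to-B-to-A round trip adds another -2, which is the growing negative value described above.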

Repugnant Graph Theory

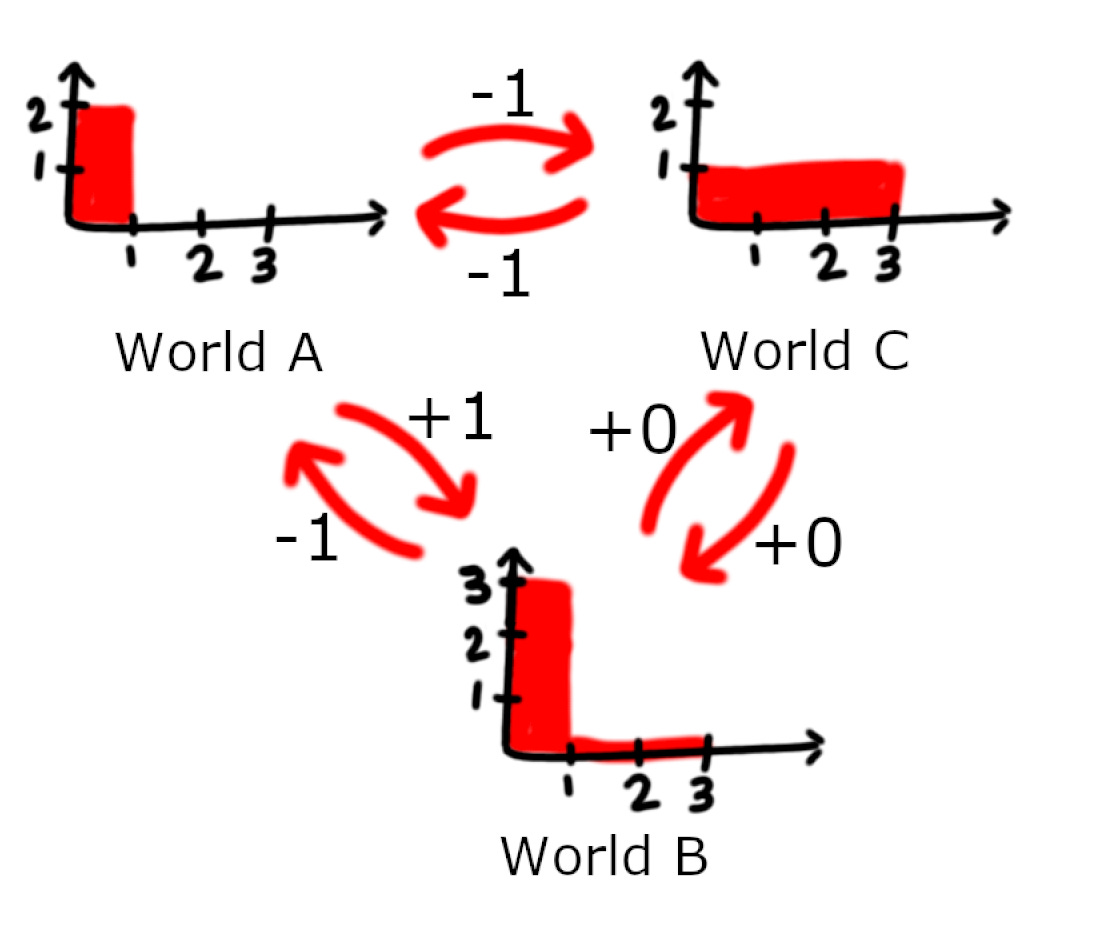

With total utilitarianism, if we move between:

World A where there’s one person with 2 units of happiness, and

World B where there are 3 people with 1 unit of happiness.

We are either adding or removing one util like so:

Nice and balanced.

But here’s the value change under person-affecting utilitarianism:

Going from World A to World B reduces the happiness of one person (-1 value), and creates two new happy people (+0 value).

Going from World B to World A destroys two happy people (-2 value) and adds one unit of happiness to one person (+1 value).

Both worlds are worse than each other! (With respect to their own population.)
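The same kind of calculation for the two worlds above, in Python. The person labels are hypothetical (`p1` is the one person who exists in both worlds), and the person-affecting rule is my own formalization: only people in the starting world count, and non-existence counts as 0.

```python
# Hypothetical identities: "p1" exists in both worlds; "p2" and "p3" only in World B.
world_a = {"p1": 2.0}
world_b = {"p1": 1.0, "p2": 1.0, "p3": 1.0}

def total_change(origin: dict[str, float], destination: dict[str, float]) -> float:
    """Total utilitarianism: difference in summed happiness."""
    return sum(destination.values()) - sum(origin.values())

def person_affecting_change(origin: dict[str, float], destination: dict[str, float]) -> float:
    """Only people who exist in the origin world count; non-existence counts as 0."""
    return sum(destination.get(p, 0.0) - h for p, h in origin.items())

print("total:", total_change(world_a, world_b), total_change(world_b, world_a))
print("person-affecting:", person_affecting_change(world_a, world_b),
      person_affecting_change(world_b, world_a))
```

Under the total view the two moves cancel (+1 and -1); under this person-affecting rule both moves come out at -1.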

Or we can add an extra step, as in the “proof” of the repugnant conclusion:

Now if you go from World A to World C via World B, then you gain one utility point. But if you go from World A to World C directly, you lose one utility point.

Weird stuff.

Person-Affecting Transitivity

Here are the axioms again for the “proof” of the repugnant conclusion:

If you make all existing people happier and create new happy people, then you make the world better.

If World X and World Y have the same population, but people are on average happier in World X, then World X is better than World Y.

World betterness is transitive. That is, if

World X is better than World Y, and

World Y is better than World Z, then

World X is better than World Z

The first two axioms still make sense in person-affecting utilitarianism. But transitivity now looks kinda weird. There is no universal sense in which one world is better than another, because it depends on who already exists. It’s entirely possible that if we move from World A to World B to World C, we might end up wanting to go back to World A.

There are still two things we could mean by transitivity:

If A > B (with respect to the current population) and B > C (with respect to the current population), then A > C (with respect to the current population).

If there is a positive-utility pathway to get from A to B, and a positive pathway to get from B to C, then there exists a positive pathway to get from A to C.

The first kind of transitivity doesn’t entail the repugnant conclusion, because if we hold the population fixed, then any move that reduces the average happiness is a downgrade.

The second kind of transitivity does entail the repugnant conclusion, but only if you physically instantiate every world between World A and World Z. Jumping straight from World A to World Z is a downgrade.

So there’s a bit more nuance to this situation than simply “transitivity must be true, and therefore the repugnant conclusion is inescapable”.
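To illustrate the second kind of transitivity, here’s a toy version of the A-to-Z staircase in Python, with hypothetical numbers. World A+ makes the existing person happier and adds three slightly happy people (premise 1); World Z has the same population as A+ but a higher average (premise 2). Scored with a person-affecting rule (my own formalization: only people in the starting world count, and non-existence counts as 0), each step is positive, but jumping straight from A to Z is negative.

```python
# Toy worlds with hypothetical numbers: person -> happiness.
world_a = {"p1": 10.0}
world_a_plus = {"p1": 11.0, "p2": 1.0, "p3": 1.0, "p4": 1.0}  # premise 1 step
world_z = {"p1": 4.0, "p2": 4.0, "p3": 4.0, "p4": 4.0}        # premise 2 step: same population, higher average

def person_affecting_change(origin: dict[str, float], destination: dict[str, float]) -> float:
    """Only people who exist in the origin world count; non-existence counts as 0."""
    return sum(destination.get(p, 0.0) - h for p, h in origin.items())

steps = [(world_a, world_a_plus), (world_a_plus, world_z)]
print([person_affecting_change(o, d) for o, d in steps])  # [1.0, 2.0]: every step looks good
print(person_affecting_change(world_a, world_z))          # -6.0: the direct jump looks bad
```

Total happiness rises at every stage (10, then 14, then 16), so the total view approves of the whole staircase and of the direct jump alike.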

Conclusion

I don’t think worrying about population ethics is very productive. Unless we can figure out how to do interpersonal utility comparisons, this is all moot.

I think person-affecting utilitarianism lines up with my intuitions a little better than total utilitarianism. However,

Person-affecting utilitarianism requires graph theory and is a real pain to use.

I’m a moral anti-realist and I see ethical theories as tools to automate moral decision making. The complexity of person-affecting utilitarianism makes it difficult to use, so overall I’m inclined to prefer total utilitarianism, which is very simple to use.

In WWOTF, MacAskill also goes into personal identity arguments for total utilitarianism over person-affecting utilitarianism. I find these reasonably convincing, which is another point in favour of total utilitarianism.

The status of the repugnant conclusion in person-affecting utilitarianism is complicated.

From the perspective of World A, going from World A to World Z is bad.

From the perspective of World Z, going from World A to World Z is good.

You can strategically go from World A to World Z via a series of intermediate worlds, such that each move looks either good or neutral.

The status of the repugnant conclusion in total utilitarianism is also a little murky.

“Only slightly positive wellbeing” is subjective.

Does it matter that there’s a value-ascending series of worlds which converges towards infinite people with zero happiness?

And at this point the whole argument seems so muddled that I’m inclined to just throw up my hands and say we don’t know whether it’s good to make happy people or not.

The repugnant conclusion doesn’t actually seem to clarify anything.

The upshot for longtermism:

If you already think that future people matter morally, then making sure the longterm future goes well is very morally important.

If you already think that morality only applies to people who already exist, then I don’t think I can change your mind.

[1] This quote comes from What We Owe The Future.

[2] You might run into physical limits at some point, where everyone has exactly one “Planck util”. But then you can continue to shrink the expected utility with a Schrödinger’s Cat-type setup, where everyone only gets one Planck util if a certain number of uranium atoms decay. Otherwise they get zero utils.

[3] This line of thinking is inspired by David Benatar’s book Better Never to Have Been.

[4] This setup is inspired by Rohin Shah’s article Person-affecting intuitions can often be money pumped.